SERP Profiler Kit: Python Tools and Scripts for SERP Data Acquisition and Analysis


SERP Profiler Kit

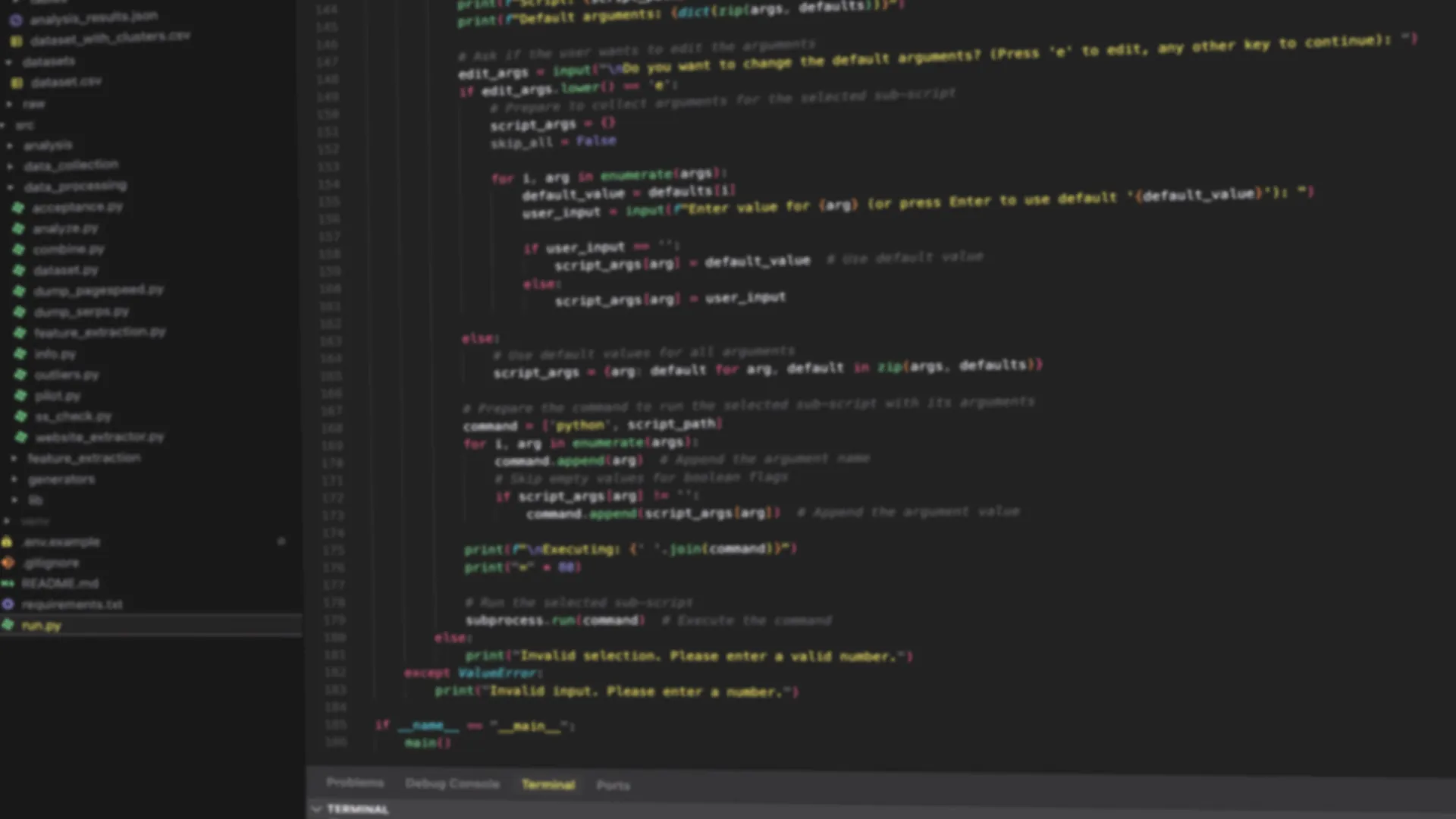

SERP Profiler Kit is a research-oriented toolkit designed to collect, process, and analyze search engine results pages (SERPs) and to generate reproducible, publication-ready research datasets and outputs.

TL;DR (Which version should you use?)

- v1 (Published / frozen): The code used in our IEEE Access paper is preserved as tag `v1.0.0` and branch `v1`.

  - Paper: https://ieeexplore.ieee.org/document/11363468

  - Repository: https://github.com/gokerDev/serp-profiler-kit (see tag `v1.0.0` or branch `v1`)

- v2 (Ongoing work): The new pipeline for our next study is developed in the same repository. Use the repository's current default branch and follow the updated documentation there.

For reproducibility and citation, prefer a tag (e.g., `v1.0.0`) or a commit hash over a moving branch.
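To make the advice about pinning concrete, here is a minimal Python sketch that builds a `git clone` command for a fixed tag. The helper names `clone_command` and `clone_pinned` and the destination directory are illustrative assumptions, not part of the toolkit. Only the repository URL and the tag `v1.0.0` come from this post.

```python
import subprocess

# Repository URL as given in this post.
REPO_URL = "https://github.com/gokerDev/serp-profiler-kit"


def clone_command(ref, dest="serp-profiler-kit"):
    """Build a `git clone` command that checks out a tag or branch by name.

    `git clone --branch` accepts tag names as well as branch names; with a
    tag, the clone ends up in a detached-HEAD state at that tagged commit.
    (Hypothetical helper, for illustration only.)
    """
    return ["git", "clone", "--branch", ref, "--depth", "1", REPO_URL, dest]


def clone_pinned(ref="v1.0.0", dest="serp-profiler-kit"):
    """Run the clone. Requires git on PATH and network access."""
    subprocess.run(clone_command(ref, dest), check=True)


# Show the command without running it.
print(" ".join(clone_command("v1.0.0")))
```

Note that `--branch` takes a tag or branch name, not a commit hash. To pin to a specific commit, one option is a regular clone followed by `git checkout <hash>`.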

What the toolkit provides

Core capabilities include:

- SERP collection (study-dependent; APIs and/or controlled scraping workflows)

- Artifact reconciliation and indexing (deduplication, normalization, run metadata)

- Feature extraction (technical signals, content/semantic signals, accessibility, runtime metrics)

- Statistical analysis and reporting outputs (tables/figures for publication)
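As a rough illustration of the deduplication and normalization step listed above, the following sketch normalizes result URLs and keeps one record per engine, query, and URL. It is not the toolkit's actual implementation. The record fields (`engine`, `query`, `rank`, `url`) and the function names are assumptions made for this example.

```python
from urllib.parse import urlsplit, urlunsplit


def normalize_url(url):
    """Lowercase scheme and host, drop the fragment, strip a trailing slash."""
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), path, parts.query, "")
    )


def deduplicate(results):
    """Keep the best-ranked record per (engine, query, normalized URL).

    The dict keys used here are hypothetical field names, not the
    toolkit's real schema.
    """
    seen = set()
    unique = []
    for row in sorted(results, key=lambda r: r["rank"]):
        key = (row["engine"], row["query"], normalize_url(row["url"]))
        if key not in seen:
            seen.add(key)
            # Store the normalized URL in the kept record.
            unique.append({**row, "url": key[2]})
    return unique


# Made-up sample records for demonstration.
rows = [
    {"engine": "google", "query": "python", "rank": 1, "url": "https://Example.com/docs/"},
    {"engine": "google", "query": "python", "rank": 2, "url": "https://example.com/docs#intro"},
    {"engine": "bing", "query": "python", "rank": 1, "url": "https://example.com/docs"},
]
unique = deduplicate(rows)
print(len(unique))
```

Here the two Google rows collapse into one because they differ only in host casing, a trailing slash, and a fragment, while the Bing row is kept as a separate observation. Whether query strings or fragments should be preserved depends on the study design.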

Repository

The repository link below is kept current:

git clone git@github.com:gokerDev/serp-profiler-kit.git

https://github.com/gokerDev/serp-profiler-kit

See publications for citation details. The v1 article is "Disentangling Technical and Content Attributes in Search Engine Ranking: A Comparative Study of Google and Bing".

See datasets for the available datasets.