MATCH: Deterministic, Local-First Website Quality Checks — Simple by Design, Extensible by Architecture

Extensible by architecture. Built for real-world and academic workflows.

Web quality testing is often loud.

Even when you use reputable tools, the output is frequently a mix of:

- boolean checks (pass/fail),

- “higher is better” scores,

- “lower is better” values,

- raw metrics with no normalization,

- and results that only make sense if you already know the measurement’s nature.

MATCH exists to remove that noise.

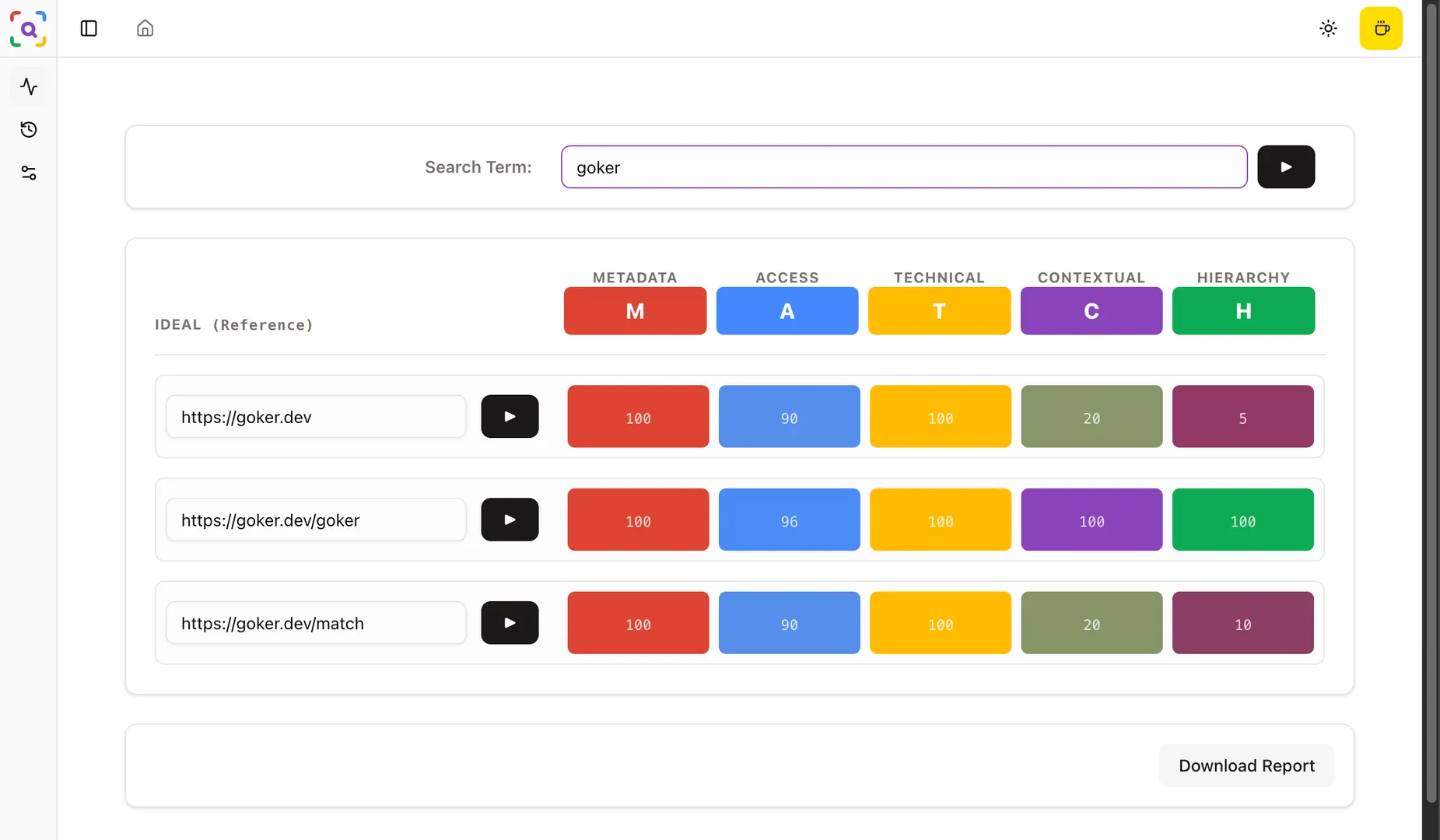

It is a local-first, deterministic website quality linter that evaluates a page through a small set of core pillars and displays the outcome as a simple heatmap—a “control panel” you can trust and interpret quickly.

The core function: a reference-based quality control card

MATCH is designed around a very concrete mental model:

a control card with five columns, where each column turns complex measurement into a clear, comparable signal.

Those five pillars are:

M: Metadata Precision

Critical metadata correctness and consistency (e.g., title/meta expectations and precision).

A: Access Quality

Deterministic accessibility baseline checks (powered by axe-core).

T: Technical Hygiene

Engineering hygiene signals: runtime errors, console issues, technical red flags.

C: Contextual Relevancy

Content alignment with a Search Term, using local similarity scoring (Transformers-based embeddings).

H: Hierarchy Integrity

Structural and semantic integrity: headings/landmarks/main/article patterns and hierarchy sanity.

The key point is not “more metrics.”

The key point is consistent interpretation.

Why “simple” is a hard requirement

MATCH intentionally avoids becoming another dashboard that tries to be everything.

It focuses on a narrow job:

- Extract signals from the page

- Evaluate them against stable references

- Normalize the outcomes

- Present them as a readable 5-column heatmap

- Export results as JSON for reuse

That simplicity is not minimalism for aesthetics—it is operational.

It means you can run it repeatedly, compare results, and make decisions fast.

The biggest problem in test results: mixed metric types

…and how MATCH solves it with metric-level normalization

When working with quality signals, the most common interpretation failure looks like this:

- Metric A is boolean (true/false)

- Metric B is “higher is better”

- Metric C is “lower is better”

- Metric D is a raw number that requires domain knowledge

- Metric E is normalized, but on a different scale

MATCH resolves this by design:

Each metric normalizes within itself before it becomes part of the heatmap.

Meaning:

- “good/bad/needs attention” is consistently expressed,

- comparisons become meaningful,

- and the user is not required to learn the “physics” of every measurement to interpret the report.

This is the difference between “a pile of numbers” and “a control card.”
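
To make metric-level normalization concrete, here is a minimal sketch of how mixed metric types could be mapped onto one shared scale. The type names, the `normalize` and `toStatus` functions, and the 0.8 / 0.5 thresholds are all assumptions for illustration; MATCH's actual implementation may differ.

```typescript
// Hypothetical metric kinds; MATCH's real types may differ.
type RawMetric =
  | { kind: "boolean"; value: boolean }
  | { kind: "higherIsBetter"; value: number; worst: number; best: number }
  | { kind: "lowerIsBetter"; value: number; best: number; worst: number };

type Status = "good" | "needs-attention" | "bad";

// Clamp a number into the closed interval [0, 1].
function clamp01(x: number): number {
  return Math.min(1, Math.max(0, x));
}

// Map any supported metric onto a 0..1 score where 1 is always "best".
// Assumes best and worst are different values.
function normalize(metric: RawMetric): number {
  switch (metric.kind) {
    case "boolean":
      return metric.value ? 1 : 0;
    case "higherIsBetter":
      return clamp01((metric.value - metric.worst) / (metric.best - metric.worst));
    case "lowerIsBetter":
      return clamp01((metric.worst - metric.value) / (metric.worst - metric.best));
  }
}

// Illustrative thresholds, not taken from MATCH.
function toStatus(score: number): Status {
  if (score >= 0.8) return "good";
  if (score >= 0.5) return "needs-attention";
  return "bad";
}
```

Once every metric goes through a function like `normalize`, a heatmap cell only needs the resulting status, regardless of whether the underlying measurement was a flag, a score, or a raw count.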

Plugin-based metric architecture

Stable engine, evolving metric set

Some tools ship with a fixed set of metrics you cannot change.

That approach is convenient, but it limits experimentation and domain-specific workflows.

MATCH takes a different path:

- the engine remains stable,

- metrics are plugins that can be added/removed/adjusted.

This architecture matters because it enables:

- tailoring checks to a specific product domain,

- running research-driven measurements,

- and evolving the system without rewriting the core.
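
As a rough illustration of the "stable engine, pluggable metrics" idea, the sketch below defines a small plugin contract and an engine loop that knows nothing about individual metrics. The `MetricPlugin` and `PageSignals` interfaces, the two example metrics, and `runMetrics` are hypothetical names, not MATCH's actual API.

```typescript
// Hypothetical signals extracted from a page; illustrative only.
interface PageSignals {
  title: string;
  headings: string[];
  consoleErrors: string[];
}

// Hypothetical plugin contract: each metric reports a score in [0, 1].
interface MetricPlugin {
  id: string;
  pillar: "M" | "A" | "T" | "C" | "H";
  evaluate(signals: PageSignals): number;
}

const titlePresent: MetricPlugin = {
  id: "title-present",
  pillar: "M",
  evaluate: (signals) => (signals.title.trim().length > 0 ? 1 : 0),
};

const noConsoleErrors: MetricPlugin = {
  id: "no-console-errors",
  pillar: "T",
  evaluate: (signals) => (signals.consoleErrors.length === 0 ? 1 : 0),
};

// The engine depends only on the contract, so the plugin list can change freely.
function runMetrics(plugins: MetricPlugin[], signals: PageSignals): Record<string, number> {
  const scores: Record<string, number> = {};
  for (const plugin of plugins) {
    scores[plugin.id] = plugin.evaluate(signals);
  }
  return scores;
}
```

Adding a domain-specific check under this kind of design means writing one more object that satisfies the contract; the engine loop stays untouched.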

Dependency-safe execution

Metrics can depend on other metrics or extracted signals.

To guarantee deterministic execution, MATCH builds an ordered plan using topological sorting during the build process.

In practice today:

- you add or remove a metric under the metrics/ folder,

- and the build script generates the correct, dependency-safe execution order.

This keeps results stable and prevents “it worked yesterday” chaos.
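
The ordering step can be sketched with Kahn's algorithm, a standard way to perform a topological sort. The graph shape, the metric ids, and the `buildExecutionPlan` function are assumptions for illustration, and the alphabetical tie-breaking is one possible way to keep the plan reproducible, not necessarily what MATCH's build script does.

```typescript
// Hypothetical shape: each metric id maps to the ids it depends on.
type DependencyGraph = Record<string, string[]>;

// Kahn's algorithm; ties are broken alphabetically so the plan is reproducible.
function buildExecutionPlan(graph: DependencyGraph): string[] {
  const ids = Object.keys(graph);
  const known = new Set<string>(ids);
  const pending = new Map<string, number>(); // unresolved dependency count per metric
  const dependents = new Map<string, string[]>(); // reverse edges: dependency -> users

  for (const id of ids) {
    const deps = graph[id] ?? [];
    pending.set(id, deps.length);
    for (const dep of deps) {
      if (!known.has(dep)) throw new Error(`Unknown dependency "${dep}" of "${id}"`);
      dependents.set(dep, [...(dependents.get(dep) ?? []), id]);
    }
  }

  // Start with every metric that has no dependencies.
  const ready = ids.filter((id) => pending.get(id) === 0).sort();
  const plan: string[] = [];

  while (ready.length > 0) {
    const id = ready.shift() as string;
    plan.push(id);
    for (const next of dependents.get(id) ?? []) {
      const remaining = (pending.get(next) ?? 0) - 1;
      pending.set(next, remaining);
      if (remaining === 0) {
        ready.push(next);
        ready.sort();
      }
    }
  }

  // Any metric left unplanned is part of a cycle.
  if (plan.length !== ids.length) throw new Error("Dependency cycle detected");
  return plan;
}
```

Because the plan is computed once at build time and ties are resolved the same way every time, the same set of metrics always runs in the same order.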

Free and open-source — by principle, not by marketing

MATCH is:

- free

- open-source

- local-first

It comes with:

- no accounts

- no premium tier

- no payments

- no remote API requirement

The intent is clear:

this is not a SaaS funnel. It is a developer tool.

Privacy and security are natural outcomes of this design:

- analysis stays on the device,

- no browsing history is collected,

- page content is not sent to a server.

Academic workflows: where MATCH becomes a multiplier

If you publish, crawl, or study the web at scale, you already know the reality:

- extraction is noisy,

- bot protection and CAPTCHA add friction,

- crawling pipelines generate huge amounts of “process noise” before you even reach meaningful measurements.

MATCH is useful here because it compresses interpretation.

Instead of collecting a hundred raw signals and then trying to remember what each means:

- MATCH yields a normalized heatmap

- backed by a machine-readable JSON report
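
A machine-readable report makes it straightforward to script a quality gate in a pipeline. The sketch below assumes a hypothetical report shape with one normalized score per pillar; the `MatchReport` interface, the field names, and the 0.5 default threshold are illustrative assumptions, so check the actual exported JSON before relying on them.

```typescript
type Pillar = "M" | "A" | "T" | "C" | "H";

// Hypothetical report shape; the real export may be structured differently.
interface MatchReport {
  url: string;
  pillars: Record<Pillar, number>; // assumed to be normalized to [0, 1]
}

// Returns the pillars that fall below the threshold for one report.
function failingPillars(report: MatchReport, threshold: number): Pillar[] {
  const order: Pillar[] = ["M", "A", "T", "C", "H"];
  return order.filter((pillar) => report.pillars[pillar] < threshold);
}

// Keeps only the pages where every pillar meets the threshold.
function passesGate(reports: MatchReport[], threshold: number = 0.5): MatchReport[] {
  return reports.filter((report) => failingPillars(report, threshold).length === 0);
}
```

In a crawl, `passesGate` could decide which pages move on to deeper analysis, while `failingPillars` records why the others were held back.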

Practical academic use-cases

Quality baselining during crawling

Use MATCH outputs as a quality gate: which pages are structurally broken, inaccessible, or technically unstable?

Dataset annotation support

When labeling pages, the heatmap becomes a quick “sanity layer” before deeper manual review.

Comparative studies

MATCH can already provide comparative results for up to 3 URLs, enabling small-scale side-by-side evaluation.

Relevancy experiments

With Search Term similarity, you can test whether a page’s content truly aligns with an intended query, useful for IR-style research setups.
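
For relevancy experiments, it helps to know how embedding-based similarity is typically scored. Cosine similarity is a common choice for comparing two embedding vectors; whether MATCH uses exactly this measure is an assumption, and the `cosineSimilarity` function below is only a sketch that expects the embeddings to be computed elsewhere.

```typescript
// Cosine similarity between two equal-length embedding vectors.
// Returns a value in [-1, 1]; returns 0 if either vector has zero length (norm).
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length === 0 || a.length !== b.length) {
    throw new Error("Vectors must be non-empty and of equal length");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  a.forEach((x, i) => {
    const y = b[i] ?? 0;
    dot += x * y;
    normA += x * x;
    normB += y * y;
  });
  const denominator = Math.sqrt(normA) * Math.sqrt(normB);
  return denominator === 0 ? 0 : dot / denominator;
}
```

In an IR-style setup, one vector would represent the Search Term and the other the page content; a score closer to 1 suggests the content points in the same semantic direction as the query.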

Where MATCH is today

- Runs as a Chrome Extension (Manifest V3)

- Supports a single-page scan and dashboard-based multi-URL analysis

- Produces a heatmap result

- Exports JSON reports

- Supports up to 3-URL comparison

- Provides Search Term ↔ content similarity via local embeddings

Customization today is already possible, but requires:

- cloning the repository,

- modifying metrics,

- building the extension,

- and loading it as an unpacked extension.

That is a deliberate tradeoff for early-stage velocity.

What’s coming in V2

Customization, reproducibility, bulk workflows

V2 focuses on turning today’s developer-friendly foundation into a user-configurable system.

1) Registry-based metrics + configurable metric sets

Instead of editing source code to customize checks:

- metrics will be discovered via a registry,

- enabled/disabled via config,

- and parameterized without patching the repository.

2) Config export = reproducible analyses

A report should not exist alone.

In V2:

- the config used to generate the report can be exported and published alongside it,

- enabling reproducibility across machines and teams,

- and making academic methodology easier to document.

3) Bulk crawling compatibility

MATCH’s architecture will be shaped to support bulk workflows:

- consistent execution,

- stable exports,

- and scalable orchestration for crawling pipelines.

The goal is not to replace your crawler; it is to make quality evaluation a reliable component within it.

4) Broader browser support

V2 is planned to expand compatibility beyond Chrome, reducing platform lock-in.

5) AI-assisted interpretation (without betraying local-first)

One major future direction is to help users interpret results faster:

- summarizing why a pillar failed,

- suggesting high-confidence fixes,

- producing developer-friendly explanations.

But the guiding constraint remains:

no remote API dependency by default.

Closing: a quality tool that respects engineering reality

MATCH is not trying to be the loudest tool.

It is trying to be the clearest one.

- Simple output

- Deterministic execution

- Metric-level normalization

- Plugin-driven extensibility

- Free and open-source

- Academic-friendly exports

- A clear V2 path toward reproducible, configurable, bulk analysis

If your workflow involves web quality checks—whether for product engineering, SEO hygiene, accessibility baselines, or research-scale crawling—MATCH is built to become a reliable, low-noise component you can trust.

Try it / Customize it

Today, customization requires editing the source and loading the extension unpacked in Chrome.

V2 aims to make customization config-driven and exportable.

If you want to contribute, propose metrics, or shape the V2 registry/config system, the project is open to collaboration.

https://github.com/gokerDEV/match

git clone git@github.com:gokerDEV/match.git